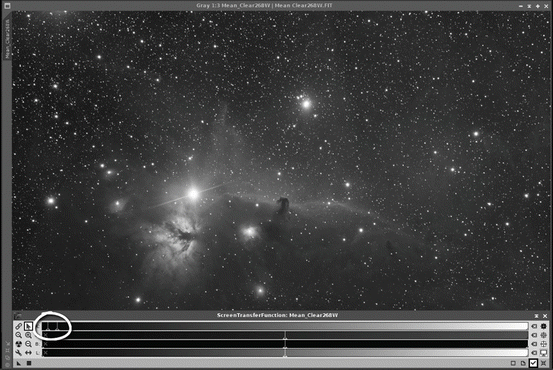

The same data scaled up (stretched by 4x). Even if we scale it up by a factor of 4, you may still not be all that impressed.

Pretty dark, isn’t it? That’s because it’s 14-bit data displayed in 16-bit containers, like I talked about last month. When mapped to your computer’s display, it has approximately one-quarter of the brightness range that it should have.
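To see where the one-quarter figure comes from, here is a minimal sketch. It assumes the viewer maps a 16-bit container to an 8-bit display level by integer division by 256; real software may use a different mapping. The name `to_display` is made up for illustration.

```python
# Assumption: a 16-bit container value is shown on an 8-bit display by
# integer division by 256. Actual viewers may map values differently.
MAX_14_BIT = 2**14 - 1   # 16383, brightest value a 14-bit sensor can record
MAX_16_BIT = 2**16 - 1   # 65535, brightest value a 16-bit container can hold


def to_display(value_16bit: int) -> int:
    """Map a 16-bit container value to an 8-bit display level (0 to 255)."""
    return value_16bit // 256


brightest_raw = to_display(MAX_14_BIT)    # white point of unscaled 14-bit data
brightest_full = to_display(MAX_16_BIT)   # white point of true 16-bit data

print(brightest_raw, brightest_full)
print(f"fraction of display range used: {brightest_raw / brightest_full:.2f}")
```

Under that assumption, the brightest possible unscaled pixel lands at display level 63 out of 255, which is about a quarter of the available range.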

Here’s a colorized image from a well-exposed daytime shot right off my Canon DSLR. (Let’s skip the fact that the unmanipulated data isn’t in color; we’ll talk about that some other time.)

Raw 14-bit data, unscaled, is pretty dark, even for a daylight image!

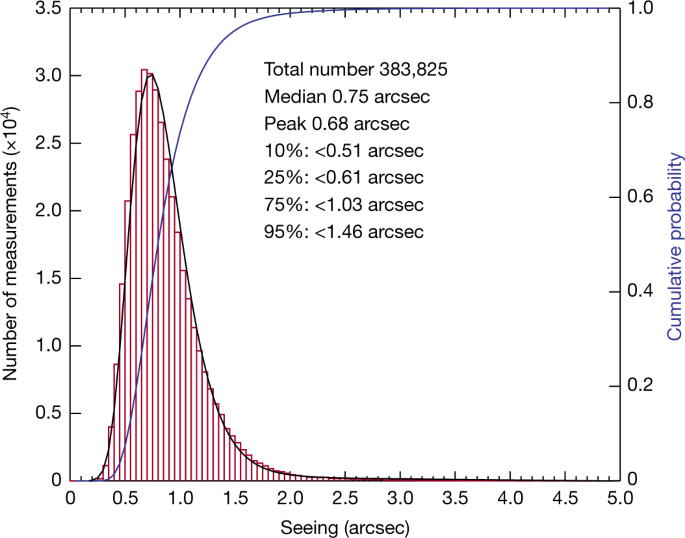

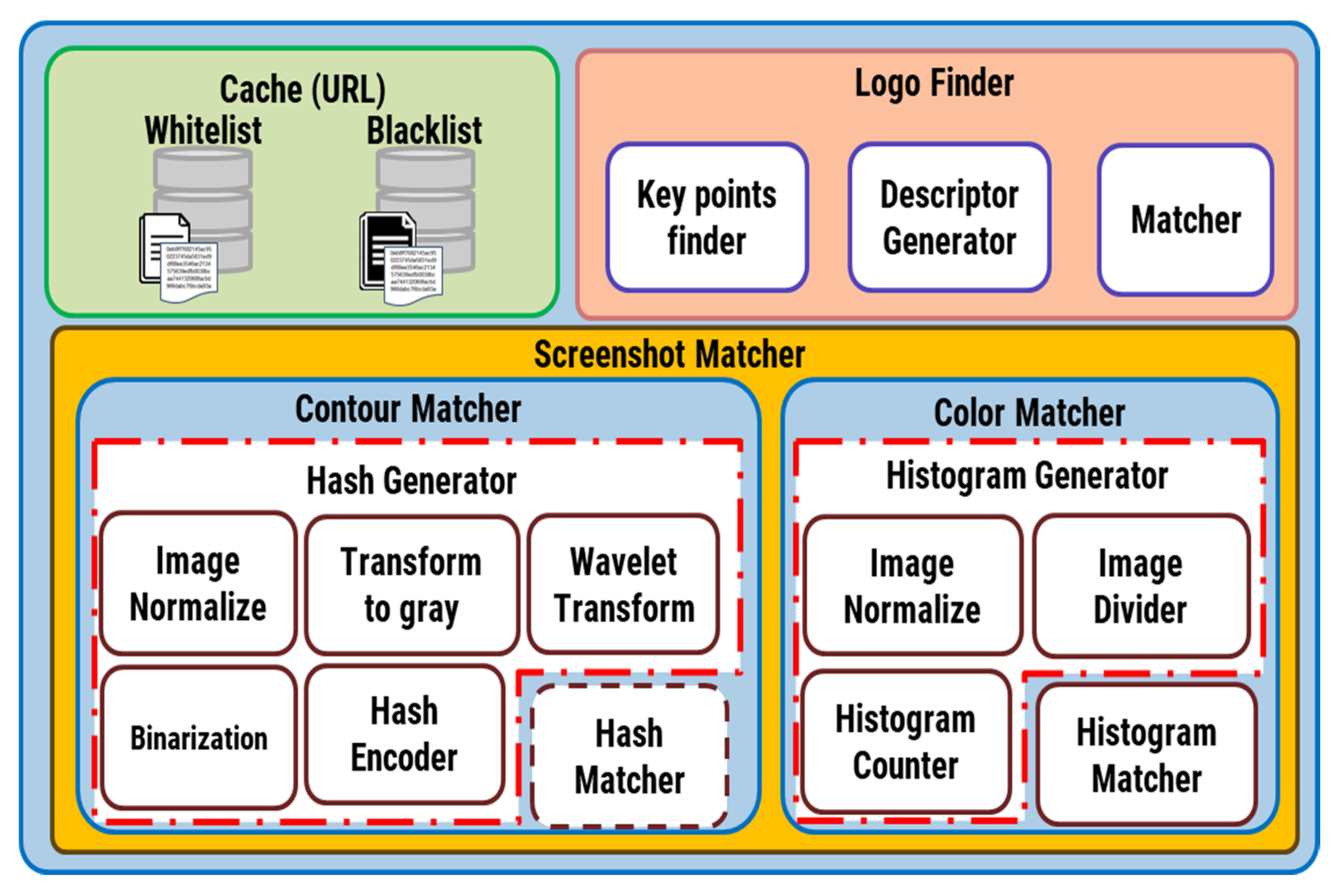

# Astronomy histogram maker software

Last month I talked about how data from a 12- or 14-bit camera might be scaled to fill the 16 bits of data range available for manipulation by image-processing software. For example, 14-bit images from a DSLR camera range from 0 to 16,383, and that can be scaled by multiplying by 4 to cover the 16-bit range (0 to 65,535) for image processing, or divided by 64 to fit the 8-bit range (0 to 255) that your computer display can represent.

Now, there are those who say that once you’ve stretched the data, you’ve destroyed it, or that when you manipulate the data from the camera, you’re not getting a true representation of the object. As a computer science professional and graphics expert, I just roll my eyes when I hear this. Otherwise, I’d start an argument much like the one I had with my daughter when she was six and insisted that the Moon followed us home from Grandma’s, because she saw it with her own eyes. In fact, it’s surprisingly hard to display a RAW image from a DSLR camera without any adjustments. Almost all software stretches data just to show it on a screen.
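The 14-bit scaling arithmetic described above can be sketched in a few lines of Python. The function names are hypothetical, and the sketch works on single integer pixel values rather than a whole image.

```python
def stretch_14_to_16(value: int) -> int:
    """Scale a 14-bit value (0 to 16383) up toward the 16-bit range."""
    return value * 4


def reduce_14_to_8(value: int) -> int:
    """Scale a 14-bit value (0 to 16383) down to the 8-bit range (0 to 255)."""
    return value // 64


# A few sample pixel values: black, a dim pixel, mid-gray, and 14-bit white.
samples = [0, 1024, 8192, 16383]
for v in samples:
    print(v, stretch_14_to_16(v), reduce_14_to_8(v))
```

Note that multiplying by 4 maps the 14-bit maximum of 16,383 to 65,532 rather than exactly 65,535, so the stretched data falls just short of full 16-bit white. For display purposes the difference is negligible.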

What does it mean to stretch your image? Simply put, stretching means scaling your data. There's more than one way to stretch your data.
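As an illustration of how two stretches can differ, the sketch below compares a linear stretch with a power-law (gamma) stretch. The text here does not say which stretches it has in mind, so both functions, their names, and the `gamma` value of 0.5 are assumptions chosen for the example.

```python
MAX_16_BIT = 65535


def linear_stretch(value: int, black: int, white: int) -> int:
    """Rescale so `black` maps to 0 and `white` maps to full 16-bit scale.

    Values outside the black/white interval are clipped first.
    """
    clipped = min(max(value, black), white)
    return round((clipped - black) / (white - black) * MAX_16_BIT)


def power_stretch(value: int, gamma: float = 0.5) -> int:
    """Nonlinear stretch: a gamma below 1 brightens dim values the most."""
    normalized = value / MAX_16_BIT
    return round(normalized ** gamma * MAX_16_BIT)


# Compare the two stretches on a dim, a medium, and a bright 14-bit value.
for v in (1000, 8000, 16383):
    print(v, linear_stretch(v, 0, 16383), power_stretch(v))
```

The linear version multiplies every pixel by the same factor, so relative brightness is preserved. The power-law version lifts faint values proportionally more than bright ones, which is why nonlinear stretches are often used to bring out dim detail.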